In the boardroom, the AI demo looks perfect.

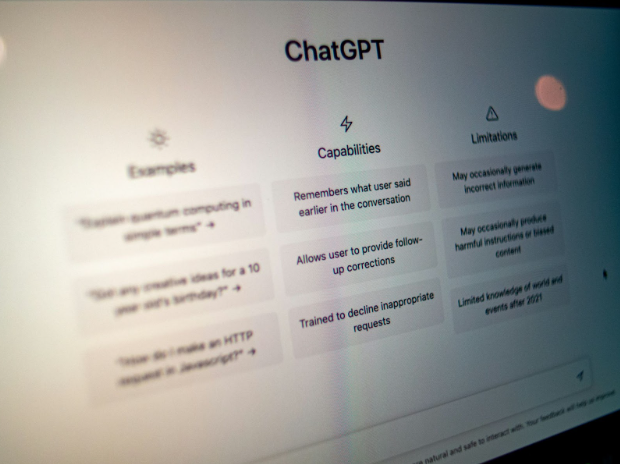

An executive types a vague question into a sleek interface. A “smart” assistant spits out a polished answer. The room nods along. Screenshots go into the earnings deck. LinkedIn posts talk about “transformational GenAI.”

Then everyone goes back to their desks and quietly opens the same public chatbot they’ve been using for a year, because the official pilot is useless in real life.

A new MIT study, “State of AI in Business 2025,” paints a brutal picture of that gap between theater and reality. According to reporting on the study, 95% of enterprise GenAI pilots fail. Not because the models are trash, but because companies are obsessed with eliminating friction instead of designing for it. The 5% that succeed are the ones that lean into resistance: messy workflows, human skepticism, compliance headaches and all.

That is the uncomfortable backdrop for a growing group of insiders who say the problem is not GenAI itself. It is the way big organizations are using it to cosplay transformation while dodging the hard work of changing how they operate.

“Most enterprises have already proven that AI works in pilots. The real challenge now is getting it out of the lab and into the business,” says Frank Palermo, COO of NewRocket. “Scaling AI is not only about selecting the right tools or platforms; it is about selecting the right use case and then aligning data, processes, and people behind it. Until companies embed AI into their workflows and governance structures, they will stay stuck in pilot mode.”

The GenAI Divide

MIT calls it the GenAI Divide. On one side, the 95%: big brands leaning on generic tools that look great in a demo, get high adoption for trivial tasks, and fall apart the second you ask them to deal with real-world context or actual stakes. On the other side, the 5%: teams that deliberately build for friction, bake in memory and learning loops, and wire GenAI into high-value workflows instead of leaving it in a sandbox.

Most companies are stuck in what MIT describes as “adoption without transformation.” Employees log into the sanctioned GenAI tool. They generate a first draft. Then they spend more time double-checking and fixing it than they would have spent doing the task themselves.

Tanmai Gopal, CEO of PromptQL, calls this the “verification tax.” When GenAI is confidently wrong, the burden shifts to workers to catch the mistakes. The more a system hallucinates, the more time humans waste policing it. That friction is unmanaged and pointless. It kills ROI.
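To see how the verification tax eats the return, it helps to run the arithmetic. The sketch below is illustrative only: the function name and the minute values are assumptions made up for the example, not figures from the MIT study or from PromptQL.

```python
def net_minutes_saved(manual_minutes: float,
                      prompting_minutes: float,
                      verification_minutes: float) -> float:
    """Time saved per task once the cost of checking the output is included.

    A negative result means the tool costs more time than it saves.
    """
    return manual_minutes - (prompting_minutes + verification_minutes)


# Hypothetical task: 30 minutes by hand, 5 minutes to prompt for a draft.
# With 35 minutes of checking and fixing, the worker loses time.
heavy_tax = net_minutes_saved(30, 5, 35)

# With only 10 minutes of checking, the same tool pays off.
light_tax = net_minutes_saved(30, 5, 10)

print(heavy_tax, light_tax)
```

In the first case the worker is 10 minutes worse off than doing the task by hand. In the second, the same tool saves 15 minutes. The model did not change. Only the cost of policing it did.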

The companies in the 5% do something different. They assume friction is inevitable and design the system around it. If the model is unsure, it abstains. It flags gaps in context. It learns from human corrections in a tight loop. That “accuracy flywheel” turns verification from unpaid labor into a feedback engine.
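A rough sketch of that behavior, under loud assumptions: the class names, the confidence score, and the 0.8 threshold below are all hypothetical, invented here for illustration. Nothing in the study describes an implementation, and a real system would get its confidence signal and its corrections store from somewhere far messier.

```python
from dataclasses import dataclass, field


@dataclass
class Draft:
    answer: str
    confidence: float  # hypothetical score in [0, 1] from the model layer
    missing_context: list[str] = field(default_factory=list)


@dataclass
class FrictionAwareAssistant:
    threshold: float = 0.8  # illustrative cutoff, not a figure from the study
    corrections: dict[str, str] = field(default_factory=dict)

    def respond(self, question: str, draft: Draft) -> str:
        # A stored human correction beats any fresh model output.
        if question in self.corrections:
            return self.corrections[question]
        # Flag gaps in context instead of papering over them.
        if draft.missing_context:
            return "NEEDS CONTEXT: " + ", ".join(draft.missing_context)
        # Abstain rather than guess when confidence is low.
        if draft.confidence < self.threshold:
            return "ABSTAIN: routed to a human reviewer"
        return draft.answer

    def record_correction(self, question: str, corrected_answer: str) -> None:
        # Keep the reviewer's fix so the same mistake is not repeated.
        self.corrections[question] = corrected_answer


assistant = FrictionAwareAssistant()
question = "Is water damage covered under this policy?"

first = assistant.respond(question, Draft("Yes, fully covered.", confidence=0.55))
assistant.record_correction(question, "Covered only for sudden leaks, not seepage.")
second = assistant.respond(question, Draft("Yes, fully covered.", confidence=0.55))

print(first)
print(second)
```

The first call abstains because the draft falls below the threshold. After a reviewer records a fix, the second call returns the corrected answer. That is the flywheel in miniature: the human's check is captured once and reused, rather than repeated on every claim.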

In other words, they treat friction as a signal, not a bug.

Shadow AI Is Already Doing the Work Leadership Won’t

MIT’s most subversive finding is not about official pilots at all. It is about the underground ones.

Even when enterprises do not buy GenAI tools, 90% of employees say they are using personal AI at work. Only around 40% of companies actually have formal GenAI subscriptions, but workers are already piping documents into whatever consumer tool helps them survive another day.

At one Fortune 500 insurer, MIT reports, the official GenAI pilot looked flawless in the boardroom but collapsed in the field because it could not retain context from one claim to the next. Meanwhile, employees were quietly using personal AI to speed up claims processing in ways that were saving the company millions a year and cutting agency spend by 30%.

On paper, the pilot failed. In practice, shadow GenAI was already generating ROI.

This is the part most corporate AI strategies refuse to look at. The real transformation is not happening in the polished prototypes. It is happening in the gray zone where workers bend the rules to get actual work done.

“The organizations that succeed will be the ones that make AI part of everyday decision-making and continuous improvement,” Palermo says. That means facing the awkward fact that many employees are already doing that on their own, without permission.

Friction as Design, Not Accident

The MIT study breaks GenAI friction into three types: human, organizational and technical. Human friction is fear, distrust and the very reasonable instinct to double-check machine output. Organizational friction is politics, incentives and legacy process. Technical friction is data quality, system integration and all the ugly edge cases that never make it into the demo.

Most pilots try to erase those frictions. They roll out a generic interface, avoid touching anyone’s job description, and pretend the data is cleaner than it is. The result is a smooth proof-of-concept that collapses the moment it meets reality.

The 5% that succeed do almost the opposite. They:

- Measure absorption, not logins: count which workflows have been redesigned around GenAI, not how many people tried the tool once.

- Fund the memory layer: If the system cannot remember context across interactions, it cannot scale.

- Redesign vendor contracts around learning milestones instead of seat licenses.

- Channel shadow AI instead of banning it, turning bottom-up hacks into official features.

- Treat spikes of resistance as design input, not grounds to roll everything back.
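The first item on that list is the easiest to make concrete. Here is one way the difference between absorption and logins might be tracked, as a minimal sketch: the `Workflow` record, the `redesigned_around_genai` flag, and every number in the sample portfolio are hypothetical, made up to show the contrast rather than drawn from the study.

```python
from dataclasses import dataclass


@dataclass
class Workflow:
    name: str
    redesigned_around_genai: bool  # hypothetical flag set by the process owner
    weekly_logins: int


def absorption_rate(workflows: list[Workflow]) -> float:
    """Share of workflows that were actually redesigned around GenAI."""
    if not workflows:
        return 0.0
    redesigned = sum(1 for w in workflows if w.redesigned_around_genai)
    return redesigned / len(workflows)


def total_logins(workflows: list[Workflow]) -> int:
    """The vanity metric: how often people opened the tool."""
    return sum(w.weekly_logins for w in workflows)


# Illustrative portfolio; every value here is invented for the example.
portfolio = [
    Workflow("claims intake", True, 40),
    Workflow("contract review", False, 900),
    Workflow("marketing copy", False, 1500),
    Workflow("customer email triage", False, 300),
]

print(total_logins(portfolio), absorption_rate(portfolio))
```

On this made-up portfolio the dashboard would show 2,740 weekly logins, which looks like adoption. The absorption rate is 0.25: one workflow in four has actually changed.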

Palermo’s version of that same playbook lives at the workflow level. His argument is that enterprises are obsessed with models and platforms when they should be obsessed with where AI actually lands in the day-to-day.

Scaling, in his words, is about “selecting the right use case and then aligning data, processes, and people behind it.”

Getting Out of Pilot Purgatory

If there is a common thread between MIT’s numbers and Palermo’s warning, it is this: pilots are too easy.

They are easy because they avoid the fight. They do not challenge who owns which process. They do not force uncomfortable conversations with compliance or legal. They do not admit that a “smart” system with no memory is just theater.

The next phase of enterprise AI will belong to the companies that are willing to get bruised. The ones that argue about workflows, rewrite contracts, and formalize the shadow tools their workers already rely on.

The rest can keep polishing their demos. Just do not confuse the smoothness of the pilot with proof that anything real is happening underneath.

Because if MIT is right, 95% of those shiny GenAI projects are already dead on arrival. The friction the other 5% embrace is not what slows them down. It is what finally gets them out of the lab.

Source: MIT Finds 95% Of GenAI Pilots Fail Because Companies Avoid Friction